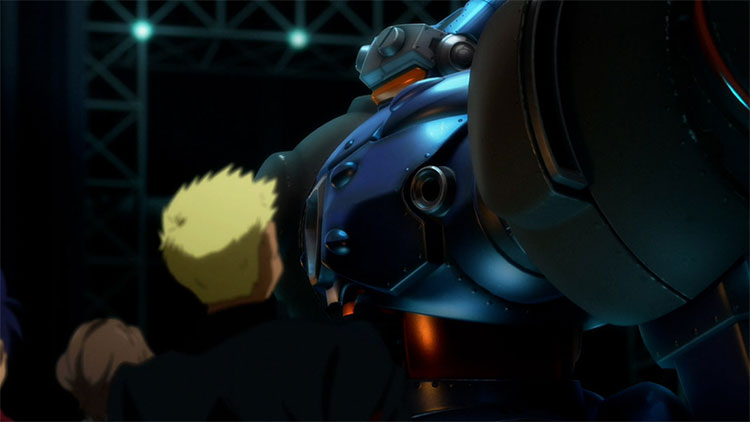

You will primarily take on the role of Yamato as you experience the game's full voice acting, its impactful battles presented in both animation and 3DCG, and its grand, sweeping story told from many disparate points of view.

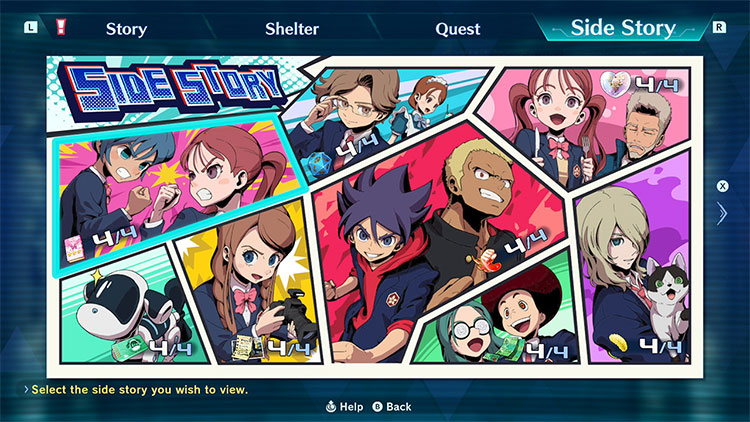

Look forward to scenes not featured in the anime and a sub-story that reveals a whole new side to the characters.